by Benjamin Lallement, DevOps and member of the collective Gologic.

Series Goals

This article series aims to explore the different tools for doing pipeline as code and deployment.

The goal for each article remains the same: check out the Git source code, compile a Java project with Maven, run the tests, then deploy the application on AWS Elastic Beanstalk.

Those steps will be written as code in a pipeline and executed with a CI/CD tool.

Each article is divided into several parts:

- Installation and startup of a CI/CD tool

- Configuration of the CI/CD tool (if needed)

- Coding the continuous deployment pipeline

- Check deployment

- A simple conclusion

If you want to run the pipeline, you will need:

- A Docker runtime to execute the pipeline steps.

- An AWS Elastic Beanstalk environment, with an access key and secret to deploy the application.

Before starting, let’s define two key concepts: continuous deployment and pipeline as code.

What does “Continuous Deployment” mean?

Continuous deployment is closely related to continuous integration and refers to the release into production of software that passes the automated tests.

“Essentially, it is the practice of releasing every good build to users”, explains Jez Humble, author of Continuous Delivery.

By adopting both continuous integration and continuous deployment, you not only reduce risks and catch bugs quickly, but also move rapidly to working software.

With low-risk releases, you can quickly adapt to business requirements and user needs. This allows for greater collaboration between ops and delivery, fueling real change in your organization, and turning your release process into a business advantage.

What does “Pipeline as Code” mean?

Teams are pushing for automation across their environments (testing), including their development infrastructure.

Pipeline as code means defining the deployment pipeline through code instead of configuring a running CI/CD tool.

Source code

The demo source code is available on GitHub: Continuous Deployment Demo

Azure DevOps

Goal

Our sixth competitor is none other than Microsoft and its platform, Azure DevOps.

The other articles in the series are: #1-Jenkins, #2-Concourse, #3-GitLab, #4-CircleCI, #5-TravisCI.

Azure DevOps is Microsoft’s platform for continuous integration, replacing previous platforms such as VSTS and TFS. It contains all the key features of development: source code repository, pipeline, test planning, and a task manager.

In this article, we’ll look at the Pipeline part: Azure Pipelines!

With Microsoft’s shift toward the open source world, Azure DevOps benefits as well: pipelines can connect to most well-known tools on the market and build all types of applications, such as .NET, Java, Python or Node.js.

Azure Pipelines provides two-way pipeline editing: from snippets to pipeline as code, and from pipeline as code back to snippets. These two editing modes make it easier to explore the available options while keeping the flexibility of editing the pipeline as code.

By default, the most common snippets are offered (compile, script, bash, powershell, publish tests, publish artifacts, etc.). Additional snippets are available as a catalog in the Azure DevOps Marketplace (https://marketplace.visualstudio.com/azuredevops), adding a wealth of tools and features.

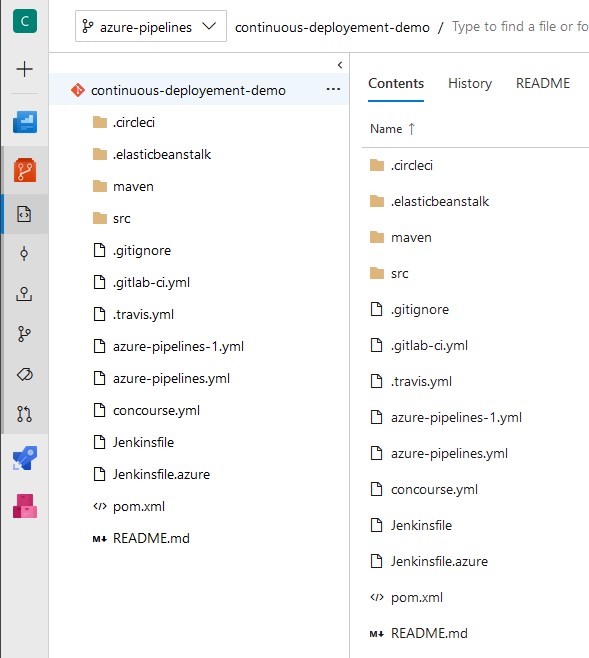

An Azure DevOps pipeline is described in YAML format. The configuration is stored in the project, in an azure-pipelines.yml file.
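
As a rough illustration of such a descriptor, a minimal azure-pipelines.yml could look like the sketch below. The branch name `master`, the `ubuntu-latest` image and the echoed message are assumptions chosen for the example, not values taken from the demo project.

```yaml
# Minimal sketch of an azure-pipelines.yml file (illustrative values only)
trigger:
- master                     # assumed branch name

pool:
  vmImage: 'ubuntu-latest'   # Microsoft-hosted agent image (assumption)

steps:
- script: echo "Hello from Azure Pipelines"
  displayName: 'Say hello'
```

A file like this holds the whole pipeline definition, so it is versioned and reviewed together with the application code.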

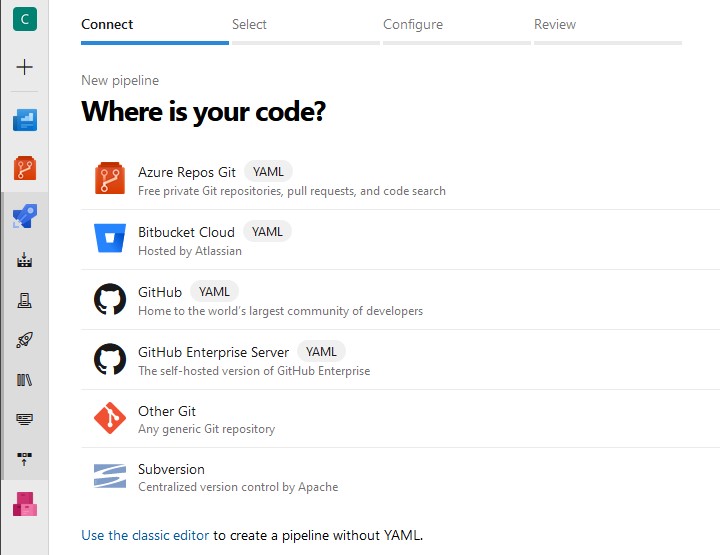

Azure Pipelines integrates by default with Azure DevOps source code repositories (our case). It is also possible to host the source code with another provider, as long as it is reachable from outside. Because the platform is only available in SaaS mode, it cannot reach a private repository unless access is opened from outside. The pipeline is triggered by a change either in the sources or in the pipeline file itself.
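
The trigger can also be narrowed down in the pipeline file. The sketch below assumes a `master` branch and a `README.md` file at the root of the repository; both are hypothetical and only serve the example.

```yaml
# Sketch: run the pipeline on pushes to master, except for README-only changes
trigger:
  branches:
    include:
    - master        # assumed branch name
  paths:
    exclude:
    - README.md     # assumed file; changes limited to it do not start a run
```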

At the framework level, Azure DevOps is similar to the other tools: a pipeline is composed of `JOB`s, each grouping task executions as `STEPS`. To sequence them, a `JOB` declares its dependencies and conditions, as well as parallelism between tasks. A `JOB` runs on an agent taken from a VM pool available in Azure and can execute all of its tasks in a Docker container.
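
To make these notions concrete, here is a small sketch with three jobs. The job names, the echoed messages and the `ubuntu:18.04` container image are assumptions made for the illustration.

```yaml
jobs:
# UnitTests and Lint declare no dependency, so they may run in parallel
# when enough agents are available
- job: UnitTests
  pool:
    vmImage: 'ubuntu-latest'
  steps:
  - script: echo "running unit tests"

- job: Lint
  pool:
    vmImage: 'ubuntu-latest'
  steps:
  - script: echo "running lint checks"

# Package waits for both jobs and only runs if they succeeded
- job: Package
  dependsOn:
  - UnitTests
  - Lint
  condition: succeeded()
  pool:
    vmImage: 'ubuntu-latest'
  container: ubuntu:18.04    # the steps of this job run inside this Docker image
  steps:
  - script: echo "packaging inside a container"
```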

The workspace is shared by all tasks of a `JOB` to simplify file exchanges (tests, artifacts, etc.). Data is passed between `JOB`s by publishing to an artifact repository.
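
The difference between the shared workspace and artifact passing can be sketched as follows. This example uses the `publish` and `download` shortcut steps rather than the tasks used later in this article; the job names, the `out` folder and the artifact name `report` are hypothetical.

```yaml
jobs:
- job: Produce
  pool:
    vmImage: 'ubuntu-latest'
  steps:
  # Both scripts run in the same job workspace, so the second one sees the file
  - script: mkdir -p out && echo "build output" > out/report.txt
  - script: cat out/report.txt
  # Publish the folder so that another job can retrieve it
  - publish: $(System.DefaultWorkingDirectory)/out
    artifact: report

- job: Consume
  dependsOn: Produce
  pool:
    vmImage: 'ubuntu-latest'
  steps:
  - checkout: none           # the sources are not needed here
  - download: current        # artifact produced by the current run
    artifact: report
  - script: cat $(Pipeline.Workspace)/report/report.txt
```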

Azure DevOps stands out from other CI/CD tools through the notions of `Deployment JOB` and `Environment`. A `Deployment JOB` executes deployment tasks on an environment according to a strategy: RunOnce, Blue-Green or Canary. This feature is missing from most other CI/CD tools, which often require installing a deployment manager such as Harness or Spinnaker to support deployment strategies.

It should be noted that Azure DevOps is still under active development, and many features are still being worked on or are not yet functional.

Azure DevOps is available as SaaS on the Microsoft Azure platform and does not exist on-premises.
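
For reference, a deployment job with the simplest strategy could be sketched as below. The job name `DeployDemo`, the environment name `demo-env` and the echoed message are assumptions, and the pipeline in this article does not use this feature.

```yaml
jobs:
- deployment: DeployDemo       # hypothetical deployment job name
  pool:
    vmImage: 'ubuntu-latest'
  environment: 'demo-env'      # hypothetical environment name
  strategy:
    runOnce:                   # run the deployment steps a single time
      deploy:
        steps:
        - script: echo "deploying to demo-env"
```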

Configure an Azure DevOps Pipeline Project

Pipeline creation

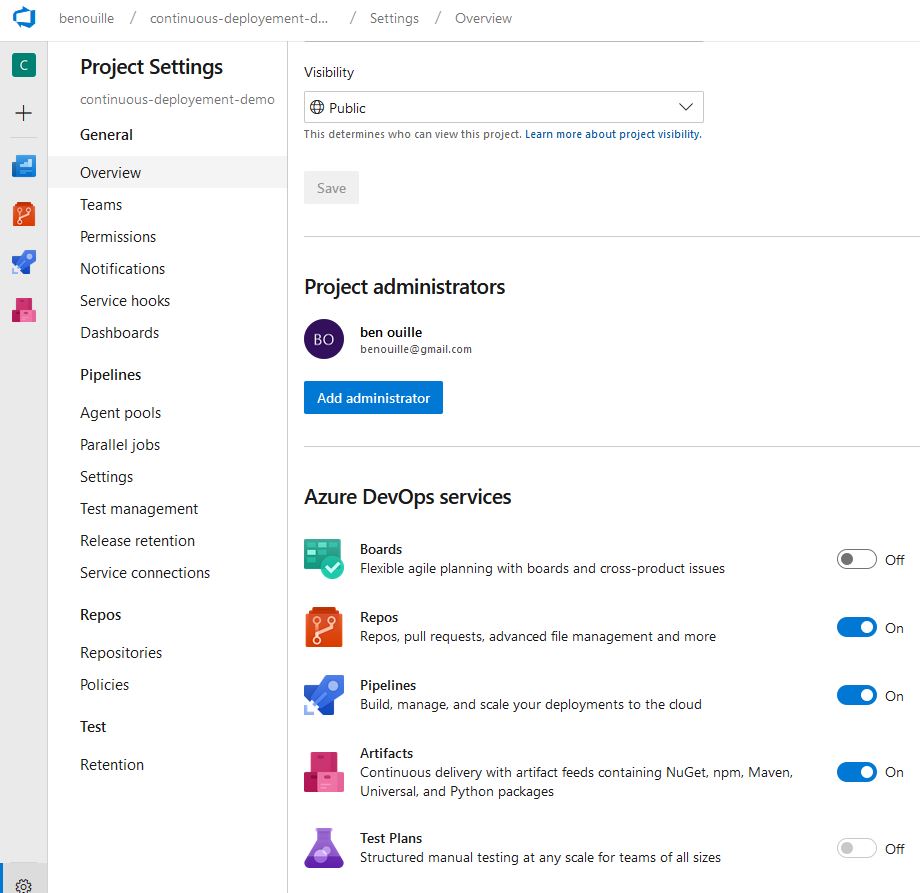

An Azure account is required to log in to the Azure DevOps platform. Once logged in, create a project and push the code into the project repository.

Project configuration

Adding source code in the repository

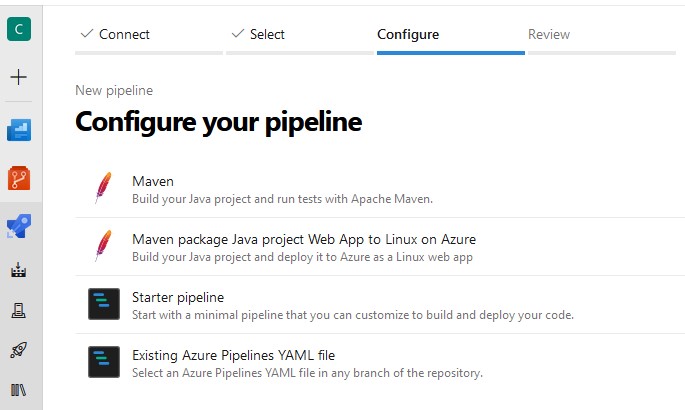

Creating the pipeline from an Azure Repos Git repository

This step adds an azure-pipelines.yml file to the root of your repository, and the pipeline runs each time the file is saved!

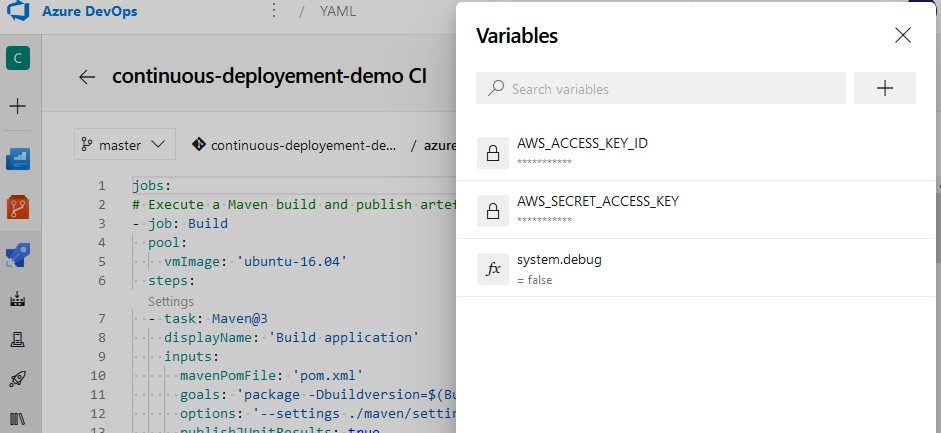

Management of environment variables

To deploy the application to AWS, the AWS credentials must be added to the variables of the project pipeline. In the pipeline editor, open the `Variables` menu and add the keys AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY with your AWS values, ticking `Keep this value secret` so that the values are never written to the logs.

These variables are now available to every task of the pipeline! Secret values, however, are only passed to a script as environment variables when the step maps them explicitly.

Now that the project is configured, let’s move on to writing the pipeline!
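
The sketch below shows one way a script step could receive the two variables: an `env` section maps each secret to an environment variable of the same name. The display name and the echoed text are placeholders for illustration.

```yaml
steps:
- script: |
    # The secret is visible to the script only because of the env mapping below
    if [ -n "$AWS_SECRET_ACCESS_KEY" ]; then echo "secret key is set"; fi
    # Secret values are masked when they appear in the logs
    echo "Access key id: $AWS_ACCESS_KEY_ID"
  displayName: 'Check AWS credentials'
  env:
    AWS_ACCESS_KEY_ID: $(AWS_ACCESS_KEY_ID)
    AWS_SECRET_ACCESS_KEY: $(AWS_SECRET_ACCESS_KEY)
```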

Pipeline as code: let’s get started

Azure DevOps uses pipelines in declarative YAML form, unlike scripted pipelines (see Jenkinsfile).

The pipeline coded in this article contains two `JOB`s, `Build` and `Deploy`:

- Build: Runs a Maven build, publishes the test results, and publishes an artifact to a repository internal to the pipeline.

- Deploy: If the Build is successful, downloads the artifact and performs a deployment to AWS with the Docker image chriscamicas/awscli-awsebcli.

The complete example is detailed below; just replace the content of your azure-pipelines.yml file with the following:

    jobs:
    # Execute a Maven build and publish the artifact
    - job: Build
      pool:
        vmImage: 'ubuntu-16.04'
      steps:
      - task: Maven@3
        displayName: 'Build application'
        inputs:
          mavenPomFile: 'pom.xml'
          goals: 'package'
          publishJUnitResults: true
          testResultsFiles: '**/surefire-reports/TEST-*.xml'
          javaHomeOption: 'JDKVersion'
          jdkVersionOption: '1.8'
          mavenVersionOption: 'Default'
          mavenOptions: '-Xmx3072m'
          mavenAuthenticateFeed: false
          effectivePomSkip: false
          sonarQubeRunAnalysis: false
      - task: PublishTestResults@2
        displayName: 'Publish tests results'
        inputs:
          testResultsFormat: 'JUnit'
          testResultsFiles: '**/surefire-reports/TEST-*.xml'
          mergeTestResults: true
      - task: PublishBuildArtifacts@1
        displayName: 'Publish application'
        inputs:
          pathtoPublish: '$(System.DefaultWorkingDirectory)'
          artifactName: continuous-deployment-demo
    # Download the artifact and deploy it only if the build job succeeded
    - job: Deploy
      pool:
        vmImage: 'ubuntu-16.04'
      dependsOn: Build
      condition: succeeded()
      container: chriscamicas/awscli-awsebcli:latest
      steps:
      - checkout: none # skip checking out the default repository resource
      - task: DownloadBuildArtifacts@0
        displayName: 'Download Build Artifacts'
        inputs:
          artifactName: continuous-deployment-demo
          downloadPath: $(System.DefaultWorkingDirectory)
      - script: |
          printenv
          ls -ltra
          echo "Check AWS_ACCESS_KEY_ID=$(AWS_ACCESS_KEY_ID)"
          eb init continuous-deployment-demo -p "64bit Amazon Linux 2017.09 v2.6.4 running Java 8" --region "ca-central-1"
          eb create azuredevops-env --single || true;
          eb use azuredevops-env
          eb setenv SERVER_PORT=5000
          eb deploy
          eb status
          eb health
        workingDirectory: $(System.DefaultWorkingDirectory)/continuous-deployment-demo
        displayName: "Provision environment and deploy application"
        # Secret variables are not exposed to scripts automatically:
        # map them explicitly so that the eb CLI can read the credentials
        env:
          AWS_ACCESS_KEY_ID: $(AWS_ACCESS_KEY_ID)
          AWS_SECRET_ACCESS_KEY: $(AWS_SECRET_ACCESS_KEY)

As soon as the azure-pipelines.yml file is added to the project, the Pipelines menu displays the pipeline run and its logs, so you can follow the progress of each step.

Conclusion

Azure DevOps offers a really effective all-in-one solution to manage the development cycle of an application.

It provides easy editing of the pipeline through interactive mode and code mode.

It integrates many “built-in” tasks and offers an impressive catalog of add-ons.

It is only available in “Cloud” mode, which can block integration with “on-premises” repositories.

Many features, such as deployment jobs, are still under development.